Most AI products rely on web search for present-day information—a costly, manual, reactive process.

Break The Web changes that by streaming real-time knowledge of current events directly into AI workflows, keeping your product automatically in sync with the world.

No web search required.

CT-X is a real-time data ingestion algorithm that transforms the raw noise of the internet into a continuously updating knowledge base of current events, ready to be embedded seamlessly into any AI workflow.

The result is a continuously updating, highly structured knowledge graph of the present that LLMs can reason over natively, with no search plug-ins required. It is the missing infrastructure layer that bridges the gap between a model's training cutoff and the present day.

Cut infrastructure costs and speed up response times with an offline dataset that delivers faster, cheaper answers on trending topics. Upgrade your front-end with a dynamic real-time user experience that goes beyond the search box.

Keep your platform responsive and relevant with embedded real-time knowledge—for chatbots, voice assistants, avatars, news apps, analytics tools, and more.

Stay ahead of the conversation with live insights—ideal for teams creating timely, trend-aware content. Combine with internal data for greater impact.

Real-time knowledge flows into your product automatically—no user search required to trigger it.

Structured data is stored offline and continuously updated, eliminating search latency.

Fixed-cost access removes the need for expensive per-query search infrastructure.

Richer, more contextual outputs enabled by a dense knowledge graph of current event data.

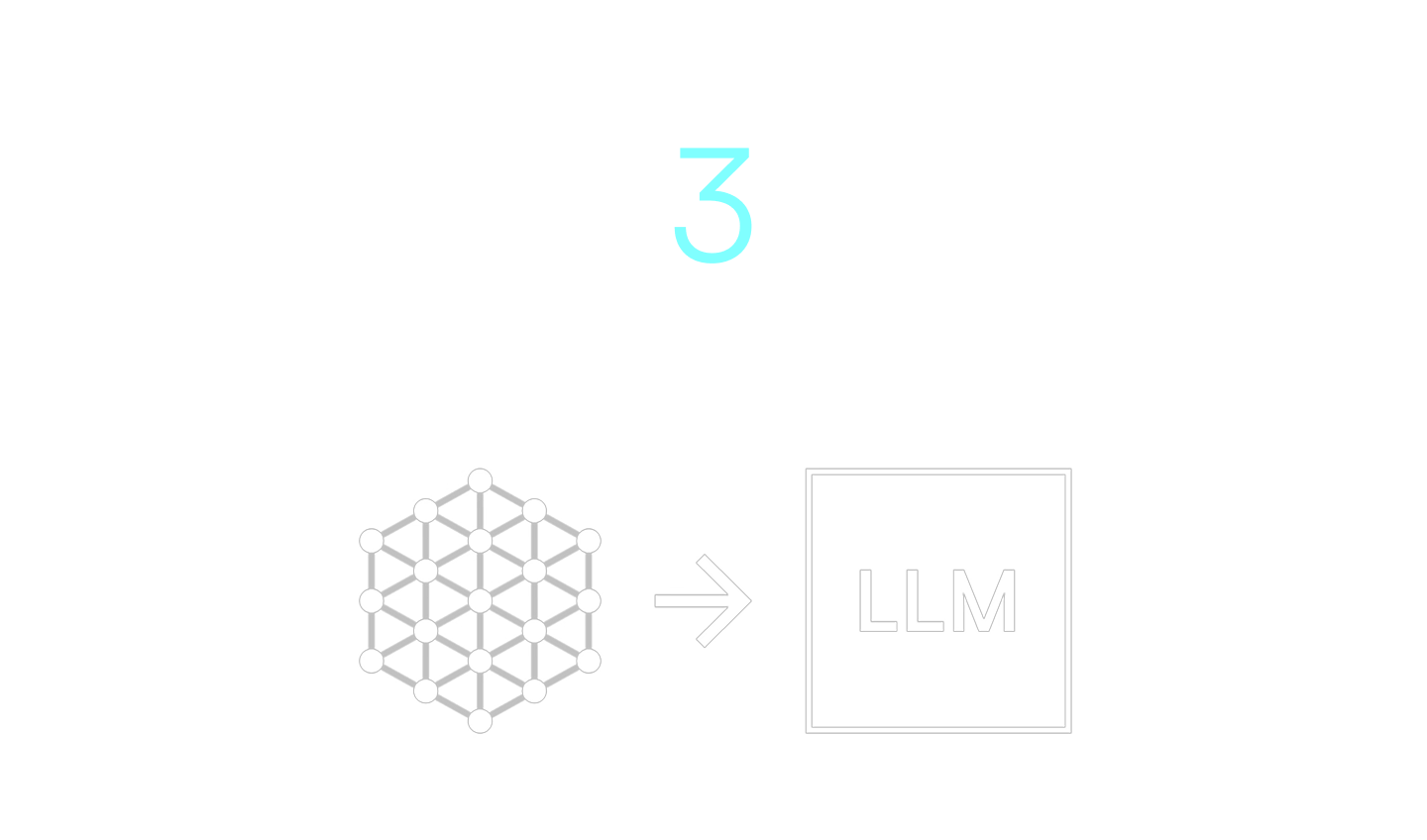

A three-step process that crawls the web in real time, converts raw data into structured knowledge packets, and deploys each packet directly into AI workflows.

A custom-built crawler scans thousands of sites in real time, collecting publicly available metadata into a large, unstructured dataset, much as a search engine does.
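To illustrate what "publicly available metadata" can mean for a single page, the following is a minimal sketch that uses only Python's standard-library `html.parser`. It pulls the `<title>` and a few common `<meta>` tags (Open Graph `og:title` and `og:description`, plus `article:published_time`). This is not the CT-X crawler. The `PageMetadata` record and the choice of tags are illustrative assumptions, and fetching pages is omitted.

```python
from dataclasses import dataclass
from html.parser import HTMLParser


@dataclass
class PageMetadata:
    """Hypothetical record for one crawled page's public metadata."""
    url: str
    title: str = ""
    description: str = ""
    published: str = ""


class MetadataParser(HTMLParser):
    """Collects <title> text and a few common <meta> tags from an HTML page."""

    def __init__(self) -> None:
        super().__init__()
        self.title_parts: list[str] = []
        self.meta: dict[str, str] = {}
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        attr_map = dict(attrs)
        if tag == "title":
            self._in_title = True
        elif tag == "meta":
            # Open Graph tags use "property"; standard tags use "name".
            key = attr_map.get("property") or attr_map.get("name")
            content = attr_map.get("content")
            if key and content:
                self.meta[key.lower()] = content

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title:
            self.title_parts.append(data)


def extract_metadata(url: str, html: str) -> PageMetadata:
    """Parse one page; prefer Open Graph values, fall back to <title> and <meta name=...>."""
    parser = MetadataParser()
    parser.feed(html)
    parser.close()
    title = parser.meta.get("og:title") or "".join(parser.title_parts).strip()
    description = parser.meta.get("og:description") or parser.meta.get("description", "")
    published = parser.meta.get("article:published_time", "")
    return PageMetadata(url=url, title=title, description=description, published=published)
```

Storing only small records like this, rather than full page bodies, keeps the raw dataset compact enough to re-cluster continuously.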

Instead of waiting for a user query, our proprietary algorithm automatically clusters all this raw data by topic and virality in real time. What was once disorganized and unactionable data is now clean, categorized, and ready to use.
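The clustering algorithm itself is proprietary. As a stand-in, the following sketch shows the general idea of grouping headlines by topic and ranking the groups by spread. It swaps in a simple technique: greedy single-pass clustering on Jaccard similarity of title words. It also assumes a hypothetical virality proxy, the number of distinct domains covering a topic. The stopword list and the `threshold` value are arbitrary illustrative choices.

```python
import re
from dataclasses import dataclass, field
from urllib.parse import urlparse

# Tiny illustrative stopword list; a real system would use a fuller one.
STOPWORDS = {"a", "an", "the", "of", "in", "on", "to", "for", "and", "is", "at", "as"}


def tokens(text: str) -> set[str]:
    """Lower-cased alphanumeric word tokens, minus stopwords."""
    return {w for w in re.findall(r"[a-z0-9]+", text.lower()) if w not in STOPWORDS}


def jaccard(a: set[str], b: set[str]) -> float:
    """Size of the intersection divided by size of the union (0.0 for two empty sets)."""
    return len(a & b) / len(a | b) if (a or b) else 0.0


@dataclass
class TopicCluster:
    keywords: set[str]
    items: list[tuple[str, str]] = field(default_factory=list)  # (url, title)

    @property
    def virality(self) -> int:
        """Hypothetical virality proxy: distinct domains covering this topic."""
        return len({urlparse(url).netloc for url, _ in self.items})


def cluster(items: list[tuple[str, str]], threshold: float = 0.3) -> list[TopicCluster]:
    """Each (url, title) joins the most similar existing cluster if the similarity
    is at least `threshold`; otherwise it starts a new cluster."""
    clusters: list[TopicCluster] = []
    for url, title in items:
        toks = tokens(title)
        best, best_sim = None, 0.0
        for c in clusters:
            sim = jaccard(toks, c.keywords)
            if sim > best_sim:
                best, best_sim = c, sim
        if best is not None and best_sim >= threshold:
            best.items.append((url, title))
            best.keywords |= toks  # widen the topic's vocabulary
        else:
            clusters.append(TopicCluster(keywords=set(toks), items=[(url, title)]))
    # Most widely covered topics first.
    return sorted(clusters, key=lambda c: c.virality, reverse=True)
```

Because clustering happens as data arrives rather than when a user asks, the expensive grouping work is done once and shared by every later query.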

Each topic cluster is converted into a supplemental knowledge “packet” and delivered directly into your product’s workflow. These packets can be used in many different ways, depending on the use case.
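The actual packet format is not documented here. The sketch below therefore assumes a hypothetical `KnowledgePacket` with a topic, a summary, source URLs, a virality count, and a UTC timestamp. It shows one plausible delivery path: the most viral packets are serialized as JSON lines into a block of text that can be placed in an LLM's system prompt. All field names and the `max_packets` cap are illustrative assumptions.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass
class KnowledgePacket:
    """Hypothetical shape of one topic cluster's knowledge packet."""
    topic: str
    summary: str
    sources: list[str]
    virality: int
    updated_at: str  # ISO 8601 timestamp, UTC


def make_packet(topic: str, summary: str, sources: list[str]) -> KnowledgePacket:
    """Deduplicate sources and use their count as a simple virality score."""
    unique = sorted(set(sources))
    return KnowledgePacket(
        topic=topic,
        summary=summary,
        sources=unique,
        virality=len(unique),
        updated_at=datetime.now(timezone.utc).isoformat(timespec="seconds"),
    )


def build_context(packets: list[KnowledgePacket], max_packets: int = 5) -> str:
    """Serialize the most viral packets, one JSON object per line, for a system prompt."""
    top = sorted(packets, key=lambda p: p.virality, reverse=True)[:max_packets]
    lines = [json.dumps(asdict(p), ensure_ascii=False) for p in top]
    return "Current events (one JSON object per line):\n" + "\n".join(lines)
```

In a chatbot, the string returned by `build_context` could be refreshed whenever new packets arrive and prepended to each conversation. The model would then see current events without issuing a search call at query time.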